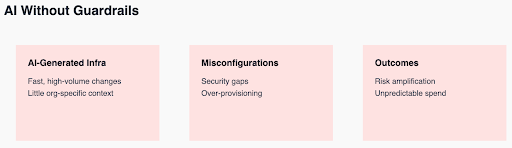

AI has dramatically lowered the friction of creating infrastructure. Developers can now generate Kubernetes manifests, Terraform modules, and CI/CD pipelines in seconds. This acceleration is powerful, but it carries a hidden danger: AI scales mistakes just as efficiently as it scales productivity.

Without guardrails, AI doesn’t just introduce risk; it amplifies it, pushing insecure configurations, fragile architectures, and runaway cloud spend into production faster than platform teams can react.

Why AI-Generated Infrastructure Creates Hidden Risks

According to recent reports, AI is fueling increased cloud complexity and spending. The problem isn’t that AI generates “bad” infrastructure. It’s that AI optimizes for speed and completion, not for organizational standards, security posture, or cost efficiency.

An AI-generated Terraform plan may technically work while silently violating IAM best practices. A Kubernetes deployment may pass functional tests while over-provisioning resources by 10x. When these patterns repeat across teams and environments, the result is a fleet of systems that function but are expensive, brittle, and risky.

How AI Breaks Traditional Platform Engineering Models

This is why AI without governance breaks traditional platform engineering assumptions. Human-in-the-loop reviews cannot keep pace with machine-generated changes. By the time a misconfiguration is detected via CNAPP alerts, CSPM findings, or monthly cost reports, it’s already running in production, already consuming budget, and already expanding the blast radius. The feedback loop is too slow, and platform engineers are left firefighting symptoms instead of shaping outcomes.

The AI Cloud Cost Problem: Unpredictable Spend at Scale

Cost is often where this failure becomes most visible. AI-generated infrastructure tends to err on the side of “safe” capacity – larger instance sizes, higher replica counts, and permissive autoscaling. Individually, these decisions seem reasonable. At scale, they create unpredictable cloud spend, where budgets drift month over month with no single obvious cause. Platform teams are then asked to explain costs they didn’t explicitly approve, driven by changes they didn’t manually review.
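One way to make this drift visible before deployment is to price the capacity a generated manifest requests and compare it to a budget. The Python sketch below illustrates the idea under loudly stated assumptions: the unit prices (`PRICE_PER_VCPU_HOUR`, `PRICE_PER_GIB_HOUR`) are hypothetical placeholders rather than real provider prices, `DeploymentRequest` and `check_cost` are hypothetical names, and requested capacity is assumed to be billed around the clock.

```python
from dataclasses import dataclass

# Hypothetical placeholder prices for illustration only; real prices vary by
# provider, region, and instance family.
PRICE_PER_VCPU_HOUR = 0.04
PRICE_PER_GIB_HOUR = 0.005
HOURS_PER_MONTH = 730  # common approximation: 24 * 365 / 12


@dataclass
class DeploymentRequest:
    """Hypothetical summary of the capacity a generated manifest requests."""
    name: str
    replicas: int
    cpu_per_replica: float      # vCPUs requested per replica
    mem_gib_per_replica: float  # GiB of memory requested per replica


def estimated_monthly_cost(d: DeploymentRequest) -> float:
    """Price the requested capacity, assuming it is billed around the clock."""
    hourly = d.replicas * (
        d.cpu_per_replica * PRICE_PER_VCPU_HOUR
        + d.mem_gib_per_replica * PRICE_PER_GIB_HOUR
    )
    return hourly * HOURS_PER_MONTH


def check_cost(d: DeploymentRequest, monthly_budget: float, max_replicas: int) -> list[str]:
    """Return human-readable violations; an empty list means the change passes."""
    violations = []
    if d.replicas > max_replicas:
        violations.append(f"{d.name}: {d.replicas} replicas exceeds limit of {max_replicas}")
    cost = estimated_monthly_cost(d)
    if cost > monthly_budget:
        violations.append(
            f"{d.name}: estimated ${cost:,.2f}/month exceeds budget of ${monthly_budget:,.2f}"
        )
    return violations


if __name__ == "__main__":
    generated = DeploymentRequest("checkout", replicas=10, cpu_per_replica=4, mem_gib_per_replica=16)
    right_sized = DeploymentRequest("checkout", replicas=2, cpu_per_replica=0.5, mem_gib_per_replica=1)
    for d in (generated, right_sized):
        print(d, check_cost(d, monthly_budget=500, max_replicas=5))
```

Under these placeholder prices, a deployment asking for 10 replicas of 4 vCPU and 16 GiB each is estimated at about $1,752 per month and trips both checks, while a 2-replica, 0.5 vCPU, 1 GiB version comes in at about $36.50. Each individual choice looks harmless; the check only makes the aggregate visible at review time instead of on the monthly bill.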

Security and Reliability Risks from AI-Generated Configurations

Security and reliability suffer in parallel. AI-generated configurations may omit network policies, skip pod security constraints, or create overly broad permissions, especially when prompts lack deep organizational context. Because these issues are configuration-based, they often bypass traditional security thinking and instead show up as policy violations, audit findings, or outages caused by subtle misconfigurations. AI accelerates delivery, but without guardrails, it also accelerates drift from intent.
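Because these gaps live in configuration, many of them can be detected mechanically. The Python sketch below scans a Kubernetes pod spec and an AWS-style IAM policy document, both represented as plain dictionaries, for a few of the issues named above. The function names are hypothetical, and the checks are deliberately incomplete (they ignore `NotAction`, conditions, init containers, and whether an existing NetworkPolicy is actually restrictive); treat this as an illustration, not a security scanner.

```python
from typing import Any


def _as_list(value: Any) -> list:
    """IAM lets Statement, Action, and Resource be a single value or a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def check_pod_security(pod_spec: dict[str, Any]) -> list[str]:
    """Flag privileged containers and containers not required to run as non-root."""
    findings = []
    pod_sc = pod_spec.get("securityContext", {})
    for container in pod_spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        sc = container.get("securityContext", {})
        if sc.get("privileged", False):
            findings.append(f"container '{name}' runs privileged")
        # A container-level runAsNonRoot overrides the pod-level value when set.
        if sc.get("runAsNonRoot", pod_sc.get("runAsNonRoot")) is not True:
            findings.append(f"container '{name}' is not required to run as non-root")
    return findings


def check_iam_policy(policy: dict[str, Any]) -> list[str]:
    """Flag Allow statements that use wildcard actions or apply to every resource."""
    findings = []
    for i, stmt in enumerate(_as_list(policy.get("Statement"))):
        if stmt.get("Effect") != "Allow":
            continue
        broad = [a for a in _as_list(stmt.get("Action")) if a == "*" or a.endswith(":*")]
        if broad:
            findings.append(f"statement {i}: wildcard actions {broad}")
        if "*" in _as_list(stmt.get("Resource")):
            findings.append(f"statement {i}: applies to all resources")
    return findings


def namespaces_without_network_policy(workload_ns: set[str], policy_ns: set[str]) -> list[str]:
    """Namespaces that run workloads but contain no NetworkPolicy object at all."""
    return sorted(workload_ns - policy_ns)


if __name__ == "__main__":
    pod = {"containers": [{"name": "app", "securityContext": {"privileged": True}}]}
    policy = {"Version": "2012-10-17",
              "Statement": [{"Effect": "Allow", "Action": "s3:*", "Resource": "*"}]}
    print(check_pod_security(pod))
    print(check_iam_policy(policy))
    print(namespaces_without_network_policy({"payments", "search"}, {"payments"}))
```

None of these findings would surface in a functional test: the privileged pod still serves traffic and the wildcard S3 policy still works, which is exactly why they need to be checked explicitly.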

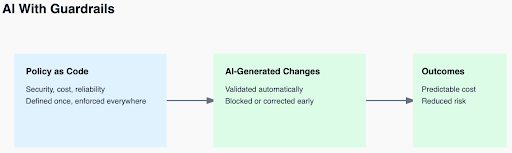

The Solution: AI-Native Platform Engineering with Automated Guardrails

The solution is not to slow AI down, but to surround it with automated guardrails. Policy-as-code gives platform engineers a way to define what “right” looks like (security controls, cost limits, reliability standards) and to enforce those rules consistently across pipelines, clusters, and cloud resources. When AI-generated infrastructure is evaluated against these policies automatically, unsafe or wasteful changes are blocked or corrected before they reach production. Governance moves from reactive to preventative.
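Conceptually, a policy-as-code guardrail is a set of machine-checkable rules, each of which either blocks a non-compliant change or rewrites it into a compliant one. The Python sketch below illustrates that block-or-correct loop on a simplified, flattened resource dictionary. The `Policy` and `Decision` types, the `evaluate` function, the five-replica cap, and the `cost-center` label requirement are all hypothetical; production setups would typically use a dedicated policy engine rather than hand-written code.

```python
import copy
from dataclasses import dataclass
from typing import Any, Callable, Optional

Resource = dict[str, Any]
MAX_REPLICAS = 5  # hypothetical organizational limit


@dataclass(frozen=True)
class Policy:
    name: str
    is_compliant: Callable[[Resource], bool]
    # Optional automatic correction; None means the policy can only block.
    fix: Optional[Callable[[Resource], Resource]] = None


@dataclass
class Decision:
    allowed: bool
    resource: Resource
    corrected: list[str]
    blocked: list[str]


def evaluate(resource: Resource, policies: list[Policy]) -> Decision:
    """Apply each policy in order: pass, auto-correct, or block."""
    current = copy.deepcopy(resource)  # never mutate the caller's input
    corrected, blocked = [], []
    for policy in policies:
        if policy.is_compliant(current):
            continue
        if policy.fix is not None:
            candidate = policy.fix(copy.deepcopy(current))
            if policy.is_compliant(candidate):  # only accept fixes that actually comply
                current = candidate
                corrected.append(policy.name)
                continue
        blocked.append(policy.name)
    return Decision(allowed=not blocked, resource=current, corrected=corrected, blocked=blocked)


POLICIES = [
    Policy(
        name="max-replicas",
        is_compliant=lambda r: r.get("replicas", 1) <= MAX_REPLICAS,
        fix=lambda r: {**r, "replicas": MAX_REPLICAS},
    ),
    Policy(
        # Ownership can't be inferred safely, so this policy blocks instead of fixing.
        name="require-cost-center-label",
        is_compliant=lambda r: "cost-center" in r.get("labels", {}),
    ),
]

if __name__ == "__main__":
    generated = {"kind": "Deployment", "name": "checkout", "replicas": 20, "labels": {}}
    decision = evaluate(generated, POLICIES)
    print(decision.allowed, decision.corrected, decision.blocked)
```

For a generated Deployment that asks for 20 replicas and carries no `cost-center` label, `evaluate` caps replicas at 5 but still blocks the change, because no rule can safely guess the owning team. Making `fix` optional keeps the line between correctable and block-only policies explicit.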

This is where AI-native platform engineering becomes essential. AI shouldn’t just generate infrastructure; it should also be constrained, guided, and corrected by systems that understand platform intent. With policy-as-code as the foundation and AI agents handling detection, remediation, and optimization, platform teams can harness AI’s speed without inheriting its chaos. The outcome is faster delivery with predictable cost, stronger security, and fewer surprises, which is exactly what platform engineering exists to provide.

Moving Forward with AI Infrastructure Generation

Organizations adopting AI for infrastructure generation face a critical decision: implement governance frameworks now or inherit compounding technical debt, security exposure, and cost unpredictability later. AI without guardrails isn’t a productivity tool; it’s a risk multiplier.