This is a republished post from the original work by Deen Aariff.

As a student of computer science, I love to work on small projects as a way of introducing myself to new technologies. Microservices, a trend with serious momentum in the software industry, were a foreign topic to me, and I wanted a project that would help me understand the technology and deploy a working prototype on the cloud.

The Pet-Clinic Application is a sample app that was converted from a monolithic to a microservices architecture by Maciej Szarlinski. It employs Spring Cloud Netflix, an open source project that integrates Netflix’s OSS components with Spring Boot apps to assist in building large-scale distributed systems.

I decided that the Pet-Clinic Application would be a great entry point into the world of microservices. In order to accomplish my goal of deploying this application on the cloud, I decided to use a platform offered by Nirmata, a container orchestration startup whose goal is to democratize container management and bring the power of microservices to all enterprises.

As a student, I found Nirmata’s product to be a simple solution to the problem I needed solved: container orchestration on the cloud.

I initially flirted with the idea of using an industry heavyweight platform, like Google’s Kubernetes, but it felt rather complex for someone being introduced to microservices for the first time. After reading through the Nirmata documentation, I realized that its easy-to-use interface would help me meet my goals and better understand the technologies involved.

In my pair of posts, I will cover what I have learned about microservices and explore how they work with Spring Cloud Netflix OSS in the context of the Pet-Clinic application. Additionally, I will explain how Nirmata allows us to deploy the application easily on the cloud with a few added benefits.

In Part 1, we will first explore some introductory concepts in the world of microservices. If you feel comfortable with microservice architectures, you can skip directly to where we will deploy the Pet-Clinic Application locally.

In Part 2, we’ll use Nirmata to deploy and monitor each of our microservices. By the end of the post, we’ll be able to access our application in the cloud. We’ll also explore some great features that are available in Nirmata, such as automatic health checks of our services.

A Brief Introduction to Microservices

In short, a microservice is an application developed to address a singular enterprise capability; multiple microservices can work in tandem in order to provide a whole suite of capabilities to a consumer.

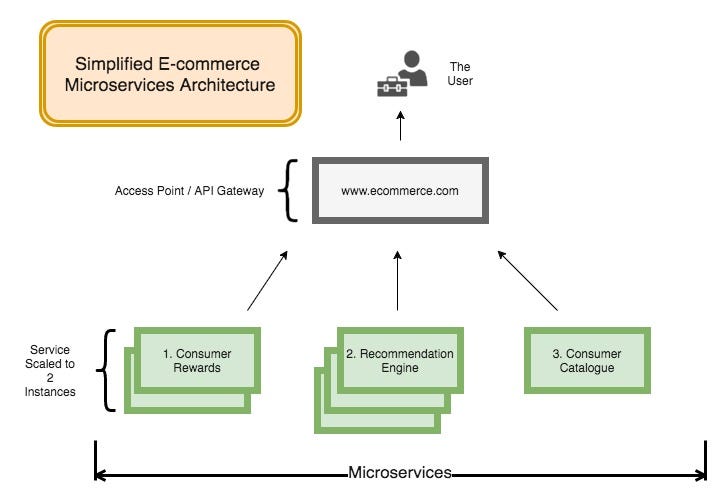

One real-world example by DJ Spiess that really clicked with me is that of a typical e-commerce platform (think Amazon, Kohl’s, Walmart, etc.) that uses a microservices architecture.

Such online retailers often provide numerous capabilities to their users, such as the ability to browse their catalogue, access a consumer-rewards program, and use a recommendation engine that engages users based on their preferences.

Each one of these capabilities can be provided by a microservice whose instances can be independently scaled. All of these capabilities are then typically abstracted away into a single point of consumption (a URL, etc.) and provided to the consumer.

Created at https://www.draw.io/

But why actually use microservices in the first place? Here are a few benefits.

- Microservices are scalable. Microservices offer the ability to scale up the instances of different services independently whenever performance requirements rise. As a result, you do not have to scale up the entire application to meet the performance demands of one part of it.

- Microservices enable agility. DevOps teams working with microservice architectures can easily employ continuous integration techniques to update individual services without compromising the entire architecture. Furthermore, locating issues unique to a service becomes simpler.

- Microservices better leverage the power of containers. Container technology, such as Docker, is awesome because it speeds up the DevOps and continuous integration processes; microservices allow us to use the power of containers in a distributed fashion.

However, like all technologies, microservice architectures come with downsides. Due to their distributed nature, they pose unique security challenges, and they often require large development teams to establish the necessary infrastructure before a microservices architecture can even be attempted. Platforms such as Nirmata seek to make the latter less of an issue for small and medium enterprises.

Microservices with Spring Cloud Netflix OSS

Two concepts come into play when dealing with microservices, and distributed systems in general: API Gateways and Service Discovery. Spring Cloud Netflix seeks to address these with the Zuul Proxy Server and the Eureka Server, respectively.

Traditionally, enterprises want a good deal of abstraction for their microservices when presenting overall capabilities to a consumer. One way to approach this is through an API Gateway (Zuul Proxy Server) that is able to communicate with each of your microservices. The API Gateway can fetch all the appropriate information needed from the appropriate microservice whenever your user requests it.
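To make the gateway idea concrete, here is a minimal sketch of path-based routing in plain Java. It is a toy illustration under my own assumptions, not how Zuul is actually implemented: the class name, the route table, and the `/api/...` path prefixes are all hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Toy sketch of gateway routing; NOT Zuul's actual implementation. */
public class GatewayRoutingSketch {

    // Hypothetical route table: URL path prefix -> logical service name.
    private final Map<String, String> routes = new LinkedHashMap<>();

    public void addRoute(String pathPrefix, String serviceName) {
        routes.put(pathPrefix, serviceName);
    }

    /** Returns the name of the service that should handle the path, or null if no route matches. */
    public String resolve(String requestPath) {
        for (Map.Entry<String, String> route : routes.entrySet()) {
            if (requestPath.startsWith(route.getKey())) {
                return route.getValue();
            }
        }
        return null;
    }

    public static void main(String[] args) {
        GatewayRoutingSketch gateway = new GatewayRoutingSketch();
        // Path prefixes and service names below are assumptions for illustration.
        gateway.addRoute("/api/vet/", "vets-service");
        gateway.addRoute("/api/visit/", "visits-service");
        gateway.addRoute("/api/customer/", "customers-service");

        System.out.println(gateway.resolve("/api/vet/vets"));   // prints "vets-service"
        System.out.println(gateway.resolve("/unknown"));        // prints "null"
    }
}
```

Notice that the gateway only resolves a request to a service name here. It still has no idea which host and port that service is actually running on.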

But how does the API Gateway know where to send its requests? This is where Service Discovery factors in.

Service Discovery is the means by which an application’s microservices “discover” and communicate with one another. A Discovery Server tracks all of your microservices’ essential information (Host Address, Host Port, etc.). Microservices can then “consult” the discovery server when they need to communicate with another microservice.

Netflix’s name for the discovery server in Spring Cloud Netflix OSS is a Eureka Server. Each microservice that wishes to register with the Eureka Server is a Eureka Client. Therefore, in order to speak with all other services, the Zuul Proxy Server itself must also be a Eureka Client.

This idea will come into play when we must specify the dependencies of our microservices.
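The register-then-look-up cycle described above can be sketched in a few lines of plain Java. This is only a mental model under my own assumptions, not Eureka’s API: the class and method names are hypothetical, and so are the service ports.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Toy in-memory discovery server; NOT the Eureka API. */
public class ServiceRegistrySketch {

    // Service name -> addresses ("host:port") of every registered instance.
    private final Map<String, List<String>> instances = new HashMap<>();

    /** A client calls this on startup to announce where it can be reached. */
    public void register(String serviceName, String host, int port) {
        instances.computeIfAbsent(serviceName, name -> new ArrayList<>())
                 .add(host + ":" + port);
    }

    /** Any client (including the gateway) calls this to find a service's instances. */
    public List<String> lookup(String serviceName) {
        return Collections.unmodifiableList(
                instances.getOrDefault(serviceName, Collections.<String>emptyList()));
    }

    public static void main(String[] args) {
        ServiceRegistrySketch registry = new ServiceRegistrySketch();
        // Hypothetical hosts and ports: two instances of one service.
        registry.register("vets-service", "localhost", 8083);
        registry.register("vets-service", "localhost", 9083);

        // The gateway "consults" the registry before forwarding a request.
        System.out.println(registry.lookup("vets-service"));   // prints "[localhost:8083, localhost:9083]"
        System.out.println(registry.lookup("visits-service")); // prints "[]"
    }
}
```

Because a service name maps to a list of instances, scaling a service up just means more entries registered under the same name.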

The Microservices in the Pet-Clinic Application

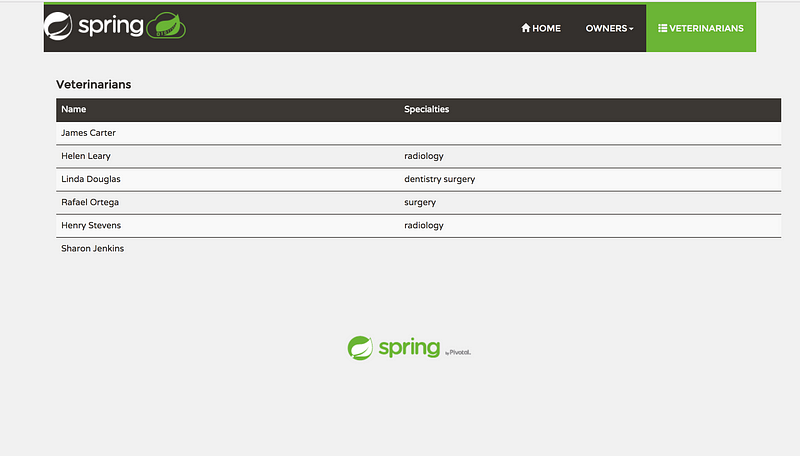

The Pet-Clinic Application uses a number of microservices, but for the purpose of this blog post, we’ll just focus on deploying six: the Configuration, Discovery (the Eureka Server), API Gateway (Zuul Proxy Server), Vet, Visit, and Customers services.

The Configuration Server/Service is the point of first contact for all of our different microservices. It serves as a centralized location for each microservice to obtain its unique configuration information. The configuration server fetches this information from a GitHub repository; it specifies the hostname and port at which the service should be reached and how to contact the Eureka Server.

The Discovery Server is what enables Service-Discovery in the Pet-Clinic application. This is what registers all of the instances of our different microservices and keeps track of how to reach them.

The remaining microservices will serve as Eureka Clients. After obtaining their configurations from the configuration server, they’ll attempt to register with the Discovery Server and receive an acknowledgement from it.

Dependencies Required

In order to deploy the Pet-Clinic application locally, you’ll first want to have a few dependencies installed.

- Java v.1.8 or greater

- Maven v.2.0 or greater — A build tool

- Docker v.1.2 or greater

- Docker-compose — A tool for orchestrating microservices

Getting Started with the Pet-Clinic Application

In order to get a picture of how the entire application comes together, we will first deploy the application locally on our personal machine. As a side note, make sure that you are running the Docker Daemon before proceeding.

First clone the repository to your local machine, then navigate to the root directory of the repository.

Building and running your containers is fairly straightforward.

To build your containers you’ll be using Maven, so make sure you have it installed. To build, run the command:

mvn clean install -PbuildDocker

To actually run your containers, you’ll be using docker-compose, which references the file docker-compose.yml in the root directory. This file coordinates the startup of our microservices and ensures that services many others depend on, such as the config server, are started first.

To start up your microservices run:

docker-compose up

Voila. After a wait of about a minute (each service that depends on the discovery-server runs a 60-second wait-for-it script), you’ll be able to access the Eureka server at http://localhost:8761 (or port 8761 on your virtual machine running Docker).
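If you are curious what a wait-for-it style check does, here is a rough Java sketch. I am assuming the script’s job is simply to block until a TCP port accepts connections or a timeout passes; the class and method names are hypothetical, and the project itself uses a shell script rather than this code.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

/** Sketch of a "wait until the port is open" check; not the project's actual script. */
public class WaitForPortSketch {

    /** Polls host:port until a TCP connection succeeds or timeoutMillis elapses. */
    public static boolean waitForPort(String host, int port, long timeoutMillis)
            throws InterruptedException {
        long deadline = System.currentTimeMillis() + timeoutMillis;
        while (System.currentTimeMillis() < deadline) {
            try (Socket socket = new Socket()) {
                // Give each connection attempt at most 500 ms.
                socket.connect(new InetSocketAddress(host, port), 500);
                return true; // something is listening
            } catch (IOException notReadyYet) {
                Thread.sleep(250); // back off briefly, then retry
            }
        }
        return false; // timed out
    }

    public static void main(String[] args) throws InterruptedException {
        // 8761 is the Eureka port used in this post; 60 seconds mirrors the wait described above.
        boolean up = waitForPort("localhost", 8761, 60_000);
        System.out.println(up ? "discovery server is reachable" : "timed out waiting");
    }
}
```

A check like this only proves that the port is open, not that the service behind it has finished initializing, which is one reason startup can still take a little while.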

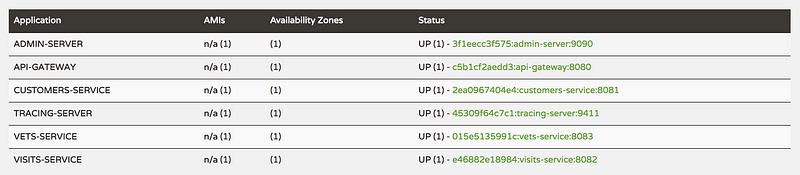

Let’s first visit our Eureka Server to see a dashboard with links to all of our different microservices that are running.

Note that we have a couple of extra microservices running here. However, we won’t be deploying them on the cloud.

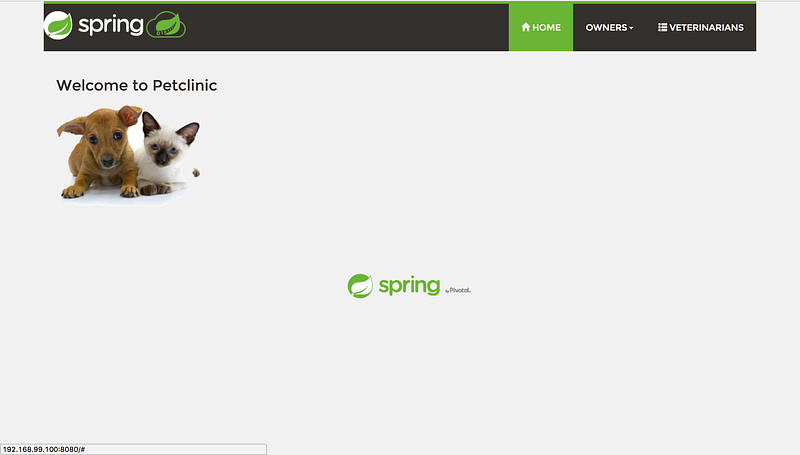

You should then be able to visit your api-gateway at http://localhost:8080 (or port 8080 on your virtual machine running Docker).

From here you can use the api-gateway to fetch information from the vets, visits, and customers services.

Putting Your Containers on Docker Hub

You’ve got your local application up and running — that’s awesome! But now you want to deploy it on the cloud. That’s where Nirmata comes in.

In order to put our containers on Nirmata, however, we’ll need to store the container images in a repository for Nirmata to fetch. While it’s an extra step, it makes continuously integrating new versions of our services into Nirmata that much easier.

The good news is that building our application creates images of docker containers that we can easily push to the Docker Hub Repository.

So before proceeding, make sure that you have an account on Docker Hub.

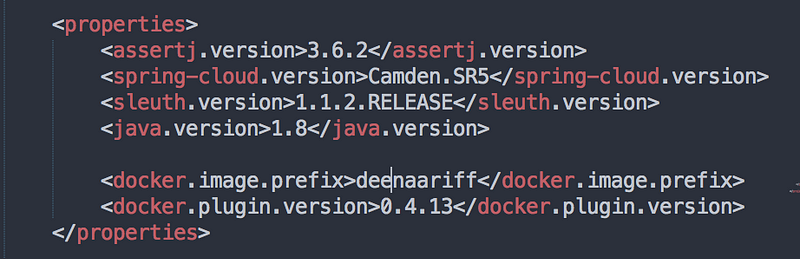

Next, we’ll want to modify the repository username, so that we can push to our own repositories. To do this open up the pom.xml file in the root directory in your favorite text editor.

Under “properties” change the “docker.image.prefix” to your Docker Hub username. For example, mine is “deenaariff”.

After building your containers again, you can find out how to push your container images to Docker Hub here. Or, if you like, you can use this bash script to help you rebuild and push them all at once.

Concluding Thoughts for Part 1

Thus far, we’ve seen how to deploy our application on our local machines. It’s a great way to wrap your head around how the microservices will work once we put them on the cloud.

In the next section of this post, we’re going to modify our container images before actually deploying them to Nirmata. To do this we’ll get our hands dirty by writing some code, but as we will find out later, the services that Nirmata offers make this a minimal effort.

Thanks for reading this far! I hope I’ve been able to communicate my excitement for this technology and its capabilities. We’ve gotten off to a great start and it’s all upwards from here. Click here for Part 2.

